Opinion: Benchmarking in Precision Agriculture is Big Statistics

Benchmarking has recently become a buzzword in precision agriculture, and before we get too excited about all the profound insight it’s going to bestow upon us, let’s take a step back and look at where this benchmarking stuff comes from, what it’s based on, and how it’s affecting the precision ag space.

The first step for companies that offer benchmarking is to gather a lot of data from a lot of growers, and before long they’ve got some Big Data to work with. With this Big Data pool, they can compare farms with similar hybrids planted on similar soil types. From there, a grower can see how his yields stack up against those of his peers farming the same type of soil and planting similar seed.
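To make that comparison concrete, here is a minimal sketch of the kind of peer ranking described above, assuming each field record carries a hybrid, a soil type, and an average yield. The record layout, the grouping rule, the `peer_percentile` helper, and the numbers are all hypothetical, made up for illustration; this is not any benchmarking company’s actual method.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FieldRecord:
    # Hypothetical record layout for illustration only.
    farm: str
    hybrid: str
    soil_type: str
    yield_bu_ac: float


def peer_percentile(records, farm):
    """Rank one farm's yield within each (hybrid, soil_type) peer group.

    The percentile is the share of *other* farms in the group whose yield is
    strictly lower than this farm's average yield in that group.
    """
    groups = defaultdict(list)
    for r in records:
        groups[(r.hybrid, r.soil_type)].append(r)

    result = {}
    for key, members in groups.items():
        mine = [r for r in members if r.farm == farm]
        peers = [r for r in members if r.farm != farm]
        if not mine or not peers:
            continue  # no peers to compare against in this group
        my_yield = sum(r.yield_bu_ac for r in mine) / len(mine)
        below = sum(p.yield_bu_ac < my_yield for p in peers)
        result[key] = 100.0 * below / len(peers)
    return result


# Made-up sample data, not real farm results.
records = [
    FieldRecord("A", "H1", "silt loam", 180.0),
    FieldRecord("B", "H1", "silt loam", 200.0),
    FieldRecord("C", "H1", "silt loam", 210.0),
    FieldRecord("D", "H1", "silt loam", 170.0),
    FieldRecord("E", "H1", "silt loam", 190.0),
    FieldRecord("A", "H2", "clay", 150.0),  # no peers, so it is skipped
]

print(peer_percentile(records, "A"))  # {('H1', 'silt loam'): 25.0}
```

Note that nothing in this calculation asks whether the yield numbers are any good: an uncalibrated monitor feeds straight into the ranking.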

I didn’t know what to make of this “benchmarking”, so a colleague of mine and I decided to test it out to see if there was something to this. My colleague’s dad farms a few hundred acres and collects yield data, but does nothing with it. And by nothing, I mean he doesn’t calibrate his monitor or even unload the data off his card.

This spring his dad’s card filled up, and he needed to free up some space so his monitor would keep working. So instead of erasing multiple years of uncalibrated, low-quality yield data, we decided to use it to test a leading company in the precision ag benchmarking space.

MORE BY BEN D. JOHNSON

Opinion: How to Leverage Precision Agriculture to Launch Your Aggressive, High-Pressure Telemarketing Business

My colleague submitted several years’ worth of his dad’s data. Shortly afterward, we got a lengthy report back that showed how his dad’s farm stacked up against his neighbors in the same county, along with pages of other fancy graphs, including:

• Yield distribution

• Yield by soil type

• Yield by elevation

• Cumulative rainfall and GDUs during the growing season

• Average rainfall and soil moisture by week

• Daily air temperature

• Evapotranspiration

• Soil temperature at the beginning of the season

These graphs didn’t seem useful for identifying specific improvements a grower could make to his operation, but they sure were fancy! The report also told us that the farm ranked in the bottom 25% of farms in our county in terms of yield. As someone who looks at a lot of yield data from our county, that did not sit well with me.

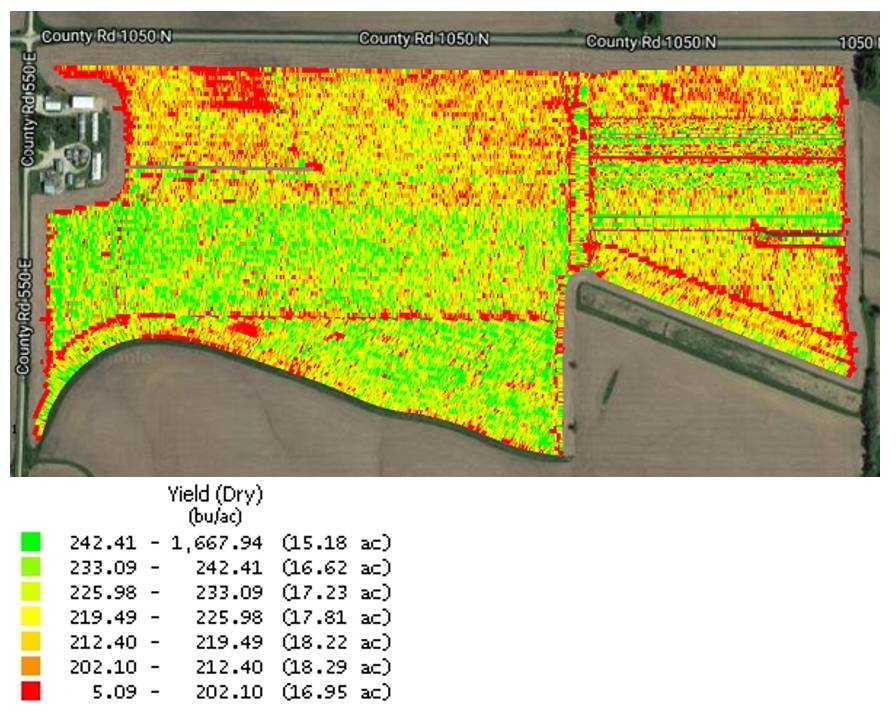

Another thing that did not sit well with me was that we didn’t get a single comment from this leading benchmarking company about the poor quality of the yield data. Take a look for yourself at one of the yield maps we submitted:

Notice not only the missing endrows but also the interesting yield ranges in the key.
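As a rough illustration of the simplest kind of sanity check that could have caught some of this, here is a minimal sketch that flags raw yield points outside a plausible range and reports what share of the points were flagged. The `screen_yield_points` helper, the 0 to 350 bu/ac bounds, and the sample values are all assumptions chosen for illustration, not an agronomic standard and not anything the benchmarking company uses.

```python
def screen_yield_points(yields_bu_ac, low=0.0, high=350.0):
    """Split raw yield-monitor values into plausible and suspect points.

    `low` and `high` (bu/ac) are illustrative bounds, not an agronomic standard.
    Returns (kept, flagged, flagged_share).
    """
    kept = [y for y in yields_bu_ac if low < y <= high]
    flagged = [y for y in yields_bu_ac if not (low < y <= high)]
    share = len(flagged) / len(yields_bu_ac) if yields_bu_ac else 0.0
    return kept, flagged, share


# Made-up sample values, not the data we submitted.
raw = [185.2, 0.0, 201.7, 1240.0, 176.4, -3.0, 193.8, 612.5]
kept, flagged, share = screen_yield_points(raw)
print(f"flagged {len(flagged)} of {len(raw)} points ({share:.0%})")
# flagged 4 of 8 points (50%)
```

A range check like this only catches the obvious outliers; it cannot see problems such as missing endrows or a monitor that was never calibrated.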

Most people would be concerned about this, but as a precision ag professional, I know that benchmarking relies heavily on some really advanced data science methods I refer to as Big Statistics (BS for short) to fill in the gaps in highly questionable data like what we submitted.

We went back and looked at the data that this benchmarking company made those fancy graphs from. We determined that it was not fluff like we originally thought, but rather it was BS. So I guess one could say that benchmarking in precision ag doesn’t really work without the heavy use of BS.

In all seriousness, if I valued the idea of benchmarking and wanted to build a benchmarking database, I would look at more than just yield and soil maps to “benchmark” my farm. In fact, step 1 might be to replace the soil maps, which were drawn by hand decades ago, with something collected with sensors (as I’ve written about before), such as Veris data or even ERU layers. Next, I would collect and consider other data layers and variables besides yield, such as:

• Soil test data

• As-applied data for crop nutrients and lime

• Planting dates

• Seeding rates

• Crop rotation

• Tillage system

• Weather

• Slope and drainage

• Chemical programs

• Profitability (I’m told this is kinda important)

But I could see how a company could overlook those factors in its rush to the marketplace. Being fancy is really important in precision ag, but being first out of the gate is top dog.

But what about being accurate and effective? Nahhh, nobody cares about that, especially when your product is fancy looking AND first!

OK, I’ll put down my cup of Haterade now and state that I’m not against innovative technology. I am just heavily in favor of good, solid science in any technology I associate myself with, no matter how impressive the smoke and mirrors of technologies that don’t meet that standard may be.

What concerns me most is that this benchmarking nonsense is going to fall flat on its face once growers figure out what’s going on and it fails to deliver anything of substance. That will leave a bad taste in everyone’s mouth and undermine the progress that good advanced analytics programs have made. It will set our industry back a few steps at a time when we should be taking several leaps forward.

So, thank you for that, benchmarking. Thanks a lot…

And when a company whose bread and butter is advanced analytics like benchmarking starts wholesaling chemicals and other farm-related products at rock-bottom prices, you have to wonder what its real motives are. Since this arm of the business is so far out in left field from its roots in benchmarking, I’m going to have to cover what it means for the industry in another article.

Stay tuned…