Betting on Rain? The Accuracy and Reliability of Precipitation Forecasts

For you sports junkies out there: if I could prove to you that I can correctly predict winners and losers with 80% accuracy, would you use my advice in Las Vegas? I don’t gamble, but according to an article on sports forecasting that summarized the results of professional research, the best methods achieved only 54% accuracy. Yet even with an accuracy that one might think is not much better than a coin flip, hypothetical bets using the best model would have produced 16% returns.

Now, what if I told you that professional weather forecasts can correctly predict whether rain will fall on your specific field 80% of the time within the next three days, and with almost 70% accuracy out to nine days? Considering how many risks we take on things that are less predictable than the weather, whether the stock market, horses, or sports, I posit that there is untapped potential in using weather forecasts properly to increase efficiency or productivity and, ultimately, improve margins. Useful rainfall predictions, for example, can reduce the risk of over-watering, of mistiming a rain-sensitive product application, or of mistiming any of a host of other operations that affect yield or operational efficiency.

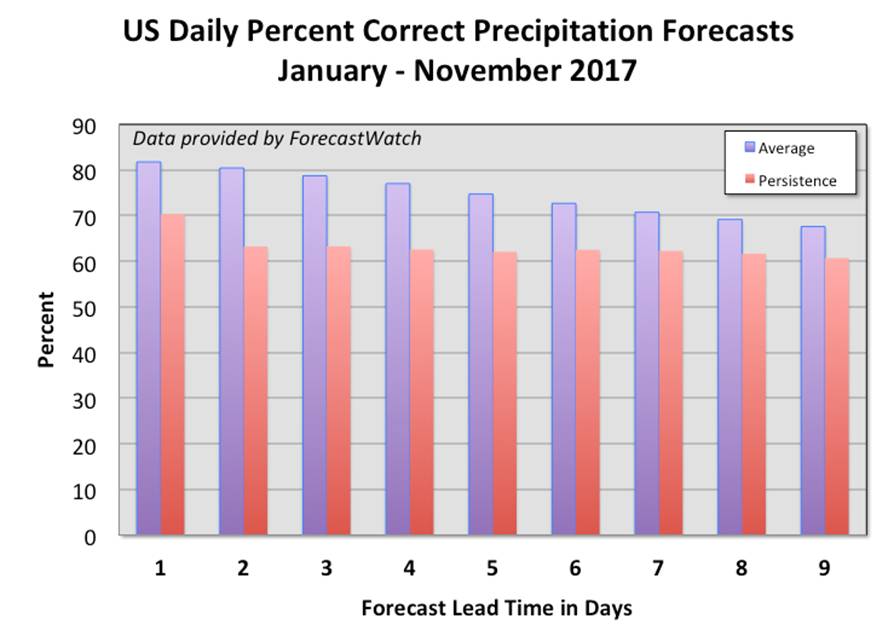

As in my last article, where I used independent forecast accuracy assessments from ForecastWatch to demonstrate that location-specific temperature forecasts are skillful out to around 10 days into the future, this month we use their service to demonstrate the accuracy of rainfall predictions. The data discussed here are averaged over many different weather service providers’ nine-day forecasts, collected daily for about 750 U.S. locations from January through November 2017. These are not “area” forecasts, where a successful forecast means only that rain fell somewhere in a larger area. Rather, these are point-to-point comparisons.

For the forecasts to be considered skillful, they have to be better than forecasts created using the “persistence” technique. A persistence forecast is one that assumes the conditions occurring at the time of the forecast will continue through the forecast period. So, if it is raining at the time of the forecast, a nine-day forecast would assume that each of the subsequent nine days would have rain. Such a forecast requires no special knowledge or skill to create.
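To make the persistence baseline concrete, here is a minimal Python sketch that builds a persistence forecast and scores it as simple percent correct against hypothetical observations. The function names and data are illustrative assumptions, not ForecastWatch code. It scores one forecast across its nine lead days; the statistics in this article instead average each lead day separately over many forecasts and locations.

```python
def persistence_forecast(rain_now: bool, days: int = 9) -> list[bool]:
    """Repeat the condition observed at forecast time for every lead day."""
    return [rain_now] * days


def percent_correct(forecast: list[bool], observed: list[bool]) -> float:
    """Fraction of days on which the yes/no rain forecast matched what happened."""
    if len(forecast) != len(observed):
        raise ValueError("forecast and observed must be the same length")
    matches = sum(f == o for f, o in zip(forecast, observed))
    return matches / len(observed)


# Hypothetical case: it is raining when the forecast is issued,
# and rain is then observed on days 1, 2, and 6 of the next nine days.
observed = [True, True, False, False, False, True, False, False, False]
fcst = persistence_forecast(rain_now=True)
print(percent_correct(fcst, observed))  # 3 of 9 days correct
```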

MORE BY BRENT SHAW

The graph below shows the average percentage of correct precipitation forecasts for all of the weather services as a function of lead time in days, compared with the persistence forecasts. Indeed, the forecast services are around 80% accurate for the first three days and tail off to just under 70% by day nine, and at every lead time they beat persistence. You might be surprised, though, that the persistence forecasts are 60-70% accurate as well. This is because precipitation events are relatively infrequent: across the entire U.S. over nearly a full year, rain occurred only about 30% of the time. These overall accuracy numbers include all of the cases where rain was neither forecast nor observed. If you simply forecast dry conditions every day for all the locations, you would still be correct almost 70% of the time.

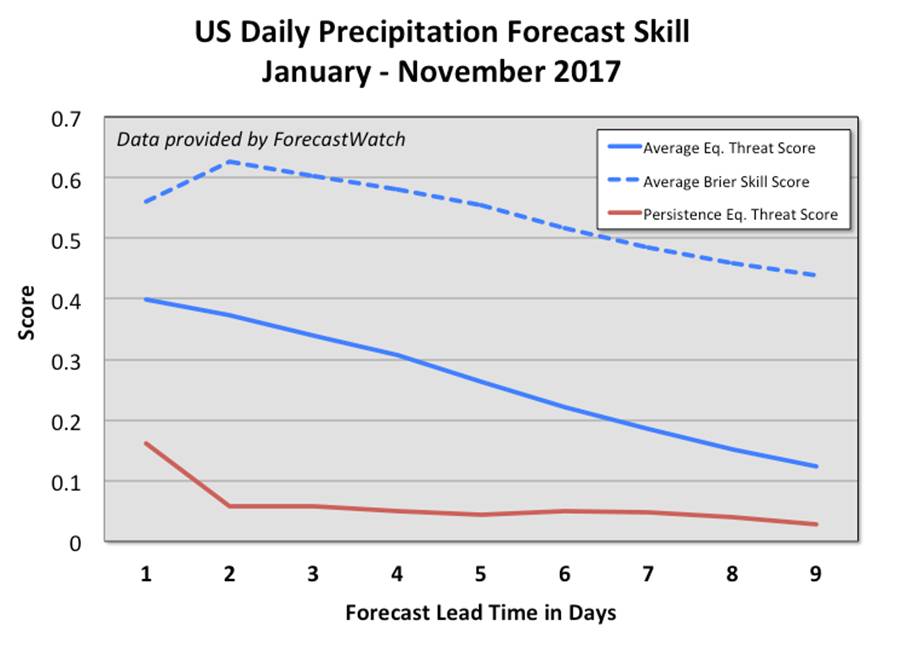
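The arithmetic behind that “almost 70%” figure can be checked with a 2x2 contingency table of forecast versus observed rain. This sketch assumes a synthetic sample of 1,000 location-days with rain on 30% of them, chosen to match the approximate base rate above rather than taken from ForecastWatch data; the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Contingency:
    hits: int               # rain forecast, rain observed
    false_alarms: int       # rain forecast, no rain observed
    misses: int             # dry forecast, rain observed
    correct_negatives: int  # dry forecast, no rain observed

    def percent_correct(self) -> float:
        total = self.hits + self.false_alarms + self.misses + self.correct_negatives
        return (self.hits + self.correct_negatives) / total


def tally(forecast: list[bool], observed: list[bool]) -> Contingency:
    """Count each forecast/observation pairing (True means rain)."""
    table = Contingency(0, 0, 0, 0)
    for fcst, obs in zip(forecast, observed):
        if fcst and obs:
            table.hits += 1
        elif fcst:
            table.false_alarms += 1
        elif obs:
            table.misses += 1
        else:
            table.correct_negatives += 1
    return table


# Synthetic sample: rain on 300 of 1,000 location-days, forecast "dry" every time.
observed = [True] * 300 + [False] * 700
always_dry = [False] * len(observed)
table = tally(always_dry, observed)
print(table)                    # Contingency(hits=0, false_alarms=0, misses=300, correct_negatives=700)
print(table.percent_correct())  # 0.7
```

The always-dry forecast never gets a single rainy day right, yet it scores 70% correct because dry days dominate the sample.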

Thus, the value of precipitation forecasts lies in those situations where rain is possible. We can use other metrics that focus on the more difficult wet events, where neither random guessing nor always guessing “dry” can score well. In the following graph, the solid lines show one such metric, the Equitable Threat Score (ETS), for the average of the providers (solid blue) versus persistence (solid red). A perfect forecast scores 1.0, and 0.0 means no skill. On average, professional service providers deliver much more skillful forecasts than persistence. In other words, you would do much better betting on one of these forecasts than basing future actions only on the rain that has already fallen.
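The ETS is computed from the same four contingency-table counts, after subtracting the hits a random forecast with the same rain frequency would be expected to get. This is a minimal sketch of the standard definition (also called the Gilbert skill score); the counts in the example are hypothetical, not the providers’ actual results.

```python
import math


def equitable_threat_score(hits: int, false_alarms: int, misses: int,
                           correct_negatives: int) -> float:
    """ETS from a 2x2 rain/no-rain contingency table.

    Hits expected by chance are removed, so a forecast that never predicts
    rain, or guesses at random, scores at or near 0; a perfect forecast scores 1.
    """
    total = hits + false_alarms + misses + correct_negatives
    # Hits a random forecast issuing rain with the same frequency would get.
    hits_random = (hits + misses) * (hits + false_alarms) / total
    denom = hits + misses + false_alarms - hits_random
    if denom == 0:
        return math.nan  # undefined, e.g. no rain forecast or observed at all
    return (hits - hits_random) / denom


# Hypothetical tables, each with rain on 300 of 1,000 location-days.
print(round(equitable_threat_score(0, 0, 300, 700), 3))      # always dry: 0.0
print(round(equitable_threat_score(200, 100, 100, 600), 3))  # 80% correct: 0.355
print(round(equitable_threat_score(300, 0, 0, 700), 3))      # perfect: 1.0
```

Note how the hypothetical skilled forecast is only 10 points better than always-dry on percent correct (80% versus 70%), while ETS separates them clearly (about 0.35 versus 0).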

For example, rather than irrigating simply because you are approaching a deficit in available water content inferred from current and past evapotranspiration estimates, rainfall, and irrigation, you can choose to hold off when rain is forecast in the next few days, and more often than not this will be the correct decision. This is especially important for crops that are sensitive to over-watering, such as soybeans in the vegetative stage, where too much moisture can lead to disease as well as yield reductions.
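The irrigation example above boils down to a simple water-balance rule with a forecast check. The sketch below is hypothetical throughout: the function names, the 50 mm capacity, the 25 mm trigger, and the 0.6 hold-off probability are illustrative assumptions, not agronomic recommendations or any product’s actual logic.

```python
def update_deficit(deficit_mm: float, et_mm: float, rain_mm: float,
                   irrigation_mm: float, capacity_mm: float) -> float:
    """Daily soil-water bookkeeping: ET increases the deficit, while rain and
    irrigation reduce it. The result is clipped to [0, capacity_mm]."""
    new_deficit = deficit_mm + et_mm - rain_mm - irrigation_mm
    return min(max(new_deficit, 0.0), capacity_mm)


def should_irrigate(deficit_mm: float, trigger_mm: float,
                    rain_prob: float, hold_prob: float = 0.6) -> bool:
    """Irrigate once the deficit reaches the trigger, unless the forecast
    chance of rain in the next few days is at least hold_prob (hypothetical)."""
    if deficit_mm < trigger_mm:
        return False
    return rain_prob < hold_prob


# Hypothetical daily (ET, rain, irrigation) values in millimeters.
days = [(6.0, 0.0, 0.0), (7.0, 0.0, 0.0), (6.0, 2.0, 0.0),
        (8.0, 0.0, 0.0), (7.0, 0.0, 0.0)]
deficit = 0.0
for et, rain, irrigation in days:
    deficit = update_deficit(deficit, et, rain, irrigation, capacity_mm=50.0)

print(deficit)                                                   # 32.0
print(should_irrigate(deficit, trigger_mm=25.0, rain_prob=0.7))  # False: hold off
print(should_irrigate(deficit, trigger_mm=25.0, rain_prob=0.2))  # True: irrigate
```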

Now, there is one more dashed line on the skill graph, which depicts a metric called the Brier Skill Score (BSS). Like ETS, a perfect BSS is 1.0. However, BSS evaluates probability forecasts against a reference forecast, and one of the main qualities it rewards is reliability. For perfectly reliable probability forecasts, the observed frequency of rain across a large set of forecasts issued with the same probability equals that probability. In other words, rain actually fell for 20% of all the forecasts calling for a 20% chance of rain. Likewise, rain fell 80% of the time for all forecasts of an 80% chance of rain.
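Here is a minimal sketch of the Brier score, the BSS, and a reliability table, using hypothetical forecasts. Using climatology (the sample’s overall rain frequency) as the BSS reference is an assumption here; the article does not say which reference ForecastWatch uses.

```python
from collections import defaultdict


def brier_score(probs: list[float], outcomes: list[int]) -> float:
    """Mean squared difference between forecast probability and outcome (1 = rain)."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def brier_skill_score(probs: list[float], outcomes: list[int],
                      reference_probs: list[float]) -> float:
    """BSS = 1 - BS / BS_ref: 1.0 is perfect, 0.0 is no better than the reference."""
    bs_ref = brier_score(reference_probs, outcomes)
    if bs_ref == 0:
        raise ValueError("reference forecast is already perfect")
    return 1.0 - brier_score(probs, outcomes) / bs_ref


def reliability_table(probs: list[float], outcomes: list[int]) -> dict[float, float]:
    """Observed rain frequency for each distinct forecast probability."""
    counts = defaultdict(lambda: [0, 0])  # probability -> [forecasts, rain events]
    for p, o in zip(probs, outcomes):
        counts[p][0] += 1
        counts[p][1] += o
    return {p: rain / n for p, (n, rain) in sorted(counts.items())}


# Hypothetical: ten 20% forecasts (rain twice) and ten 80% forecasts (rain eight times).
probs = [0.2] * 10 + [0.8] * 10
outcomes = [1] * 2 + [0] * 8 + [1] * 8 + [0] * 2
climatology = sum(outcomes) / len(outcomes)  # 0.5
reference = [climatology] * len(outcomes)

print(reliability_table(probs, outcomes))                       # {0.2: 0.2, 0.8: 0.8}
print(round(brier_skill_score(probs, outcomes, reference), 3))  # 0.36
```

These hypothetical forecasts are perfectly reliable, yet the BSS is only about 0.36, because the score also rewards forecasts that lean confidently toward 0% or 100% when the outcome is clear. Reliability is necessary for a high BSS, but not sufficient on its own.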

Having reliable probabilities means decision support tools can use objective cost-loss modeling to take on a level of risk appropriate to the potential savings or gains. We are now reaching a point where some providers can deliver objective, reliable, and calibrated probability forecasts that enable cost-loss optimization in automated decision support tools. Because of the discrete nature of rainfall, especially in showery situations, there will be times the forecast misses, just as a sports bookie sometimes does. But a disciplined approach based on objective probabilistic rainfall forecasts will yield more wins than losses, and the agtech community needs to rely more on near-term weather forecasts that are professionally generated and designed for agronomic decision making.
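The classic static cost-loss model shows why reliable probabilities matter. If a protective action (say, postponing a rain-sensitive application) costs C, and failing to act when rain occurs loses L, then acting pays off on average whenever the probability of rain exceeds C/L. This is a minimal sketch of that textbook rule, not any provider’s decision tool; the dollar figures are hypothetical, and the rule only minimizes expected expense if the probabilities are well calibrated.

```python
def should_protect(rain_prob: float, cost: float, loss: float) -> bool:
    """Static cost-loss rule: act when the expected loss from not acting
    (rain_prob * loss) exceeds the certain cost of acting."""
    if not 0 < cost < loss:
        raise ValueError("expected 0 < cost < loss")
    if not 0.0 <= rain_prob <= 1.0:
        raise ValueError("rain_prob must be between 0 and 1")
    return rain_prob * loss > cost


def expected_expense(rain_prob: float, cost: float, loss: float) -> float:
    """Expected expense of the choice the rule makes, assuming rain_prob is reliable."""
    if should_protect(rain_prob, cost, loss):
        return cost
    return rain_prob * loss


# Hypothetical: postponing costs $500; rain washing off the product costs $2,000.
cost, loss = 500.0, 2000.0
print(cost / loss)  # decision threshold C/L: 0.25
for p in (0.1, 0.3, 0.6):
    print(p, should_protect(p, cost, loss), expected_expense(p, cost, loss))
```

If the forecast probabilities were systematically too high or too low, the C/L threshold would trigger action at the wrong times, which is why calibration matters for automated decision support.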