Agricultural Drones: From Detection to Diagnosis


No remote sensing platform is inherently better at everything: satellites, manned aircraft, and unmanned aerial vehicles (UAVs) each fill a niche where they excel.

Satellite data acquisition is nearly passive and allows for comparison of long-term trends. Manned aircraft are very cost-effective for larger acreages. UAVs (let’s just call them drones, shall we?) have three key advantages:

- data capture is near real-time and on demand

- imagery can be extremely high resolution

- newest-generation sensors can be deployed almost immediately.

In the coming years, these benefits will take drones from mid-altitude crop health mapping tools to low-altitude diagnostic powerhouses.

Real-time, On-Demand

Topography, soil, water and management history are crucial datasets for crop decision-making. Imagery often plays only a minor supporting role in those decisions. But in those instances when imagery is crucial for crop management, it needs to provide information that can be acted on quickly. While satellite imagery has been improving, with companies putting dozens of satellites into space to increase imaging frequency, satellite providers still cannot deliver actionable data within a day.

Satellite frequency is often enough for early-season fertilizer decisions, for example. But in regions with cloud or smoke cover during the summer, even that frequency will sometimes be insufficient for simple applications like variable-rate fungicide. Drones, on the other hand, can acquire data almost on demand, if and when it is required for farm management decisions. Most systems can already produce a map within hours of a UAV flight, and some, such as the Slantrange system, reduce that to a matter of minutes. This ability not only to capture data on demand but also to turn it into action almost immediately gives drones a unique niche in mid-season precision agriculture.

Extreme Resolution

Currently, most agricultural drones are flown autonomously at 300 to 400 feet to map an entire field efficiently, and the resulting resolution figures sound very impressive. The most common measure of remote sensing image resolution is ground sample distance (GSD), the distance between pixel centers measured on the ground. Most small drones today produce pixels of 2 to 5 cm from those altitudes.
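To make the GSD figure concrete, here is a minimal sketch of how it follows from flight altitude and camera geometry, assuming a camera pointing straight down over flat ground. The camera numbers (sensor width, focal length, image width) are hypothetical values chosen for illustration, not the specification of any particular drone.

```python
FEET_TO_M = 0.3048


def ground_sample_distance_cm(altitude_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Return GSD in cm/pixel for a camera pointing straight down over flat ground."""
    # Similar triangles: ground footprint width = altitude * sensor width / focal length.
    footprint_width_m = altitude_m * sensor_width_mm / focal_length_mm
    # Spread that footprint across the pixels in one image row, then convert m to cm.
    return footprint_width_m / image_width_px * 100.0


# Hypothetical camera, chosen only for illustration:
# 13.2 mm wide sensor, 8.8 mm focal length, 5472 pixels per image row.
CAMERA = dict(sensor_width_mm=13.2, focal_length_mm=8.8, image_width_px=5472)

for altitude_ft in (300, 400):
    gsd = ground_sample_distance_cm(altitude_ft * FEET_TO_M, **CAMERA)
    print(f"{altitude_ft} ft: {gsd:.1f} cm/pixel")
```

With these assumed camera values, both altitudes land inside the 2 to 5 cm range quoted above. A different sensor or lens shifts the numbers, but GSD scales linearly with altitude either way.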


However, when a full-field census is required, much higher efficiency can be obtained by manned aircraft or by UAVs flying beyond visual line of sight at thousands of feet above ground. The sweet spot for small UAVs, on the other hand, is actually below 50 feet, where their sensors can show you the margins of leaves and whether there are auricles clasping the stem. For such proximal imagery, resolution is more naturally expressed in pixels per centimeter than in centimeters per pixel.

When was the last time you diagnosed a specific disease from the road or estimated fertilizer need from a quick glance at your wheat? Simple patterns and the average light reflectance of a crop can give a grower a sense of its overall health. But when it comes to really figuring out what is limiting our crop’s growth, we all scuff aside a little soil or pull some seedlings for closer inspection. The equivalent for drones will be diagnostic sensing systems that can recognize the smallest details of each part of a plant rather than average reflectance values from the crop canopy.
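The switch from cm/pixel to pixels per centimeter can be sketched the same way. The example below inverts the usual footprint calculation and estimates how many pixels would span a small plant feature at low altitude. The camera values and the 2 mm feature size are hypothetical, and the calculation assumes a camera pointing straight down at a flat target.

```python
FEET_TO_M = 0.3048


def pixels_per_cm(altitude_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Return image pixels per centimeter of ground for a downward-pointing camera."""
    # Ground footprint of one image row, in centimeters.
    footprint_width_cm = altitude_m * sensor_width_mm / focal_length_mm * 100.0
    return image_width_px / footprint_width_cm


def pixels_across_feature(feature_size_mm, altitude_m, **camera):
    """Return how many pixels span a feature of the given size (in mm)."""
    return pixels_per_cm(altitude_m, **camera) * feature_size_mm / 10.0


# Hypothetical camera, chosen only for illustration.
CAMERA = dict(sensor_width_mm=13.2, focal_length_mm=8.8, image_width_px=5472)

for altitude_ft in (50, 10):
    altitude_m = altitude_ft * FEET_TO_M
    density = pixels_per_cm(altitude_m, **CAMERA)
    # A 2 mm feature is an assumed stand-in for a small structure such as an auricle.
    span = pixels_across_feature(2.0, altitude_m, **CAMERA)
    print(f"{altitude_ft} ft: {density:.1f} pixels/cm, {span:.1f} pixels across 2 mm")
```

Pixel density rises in inverse proportion to altitude, which is why fine plant detail only becomes visible in low passes.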

Machine Learning: The Secret Sauce

Ag drones already combine aeronautics, robotics, optics, remote sensing, agronomy and the many other disciplines required to build such complex sensing machines. In the past few years, we have added the ability to use near-infrared light (in NIR-converted cameras) and red-edge light (in multispectral systems). As a result, drones can already see more than the human eye.

But real disruption will only come from machine learning, especially when combined with extremely high-resolution spectral data. This computing discipline (a branch of artificial intelligence that includes deep learning) will enable the seemingly magical interpretation of complex datasets using millions of training data points.

Markus Weber is Co-Founder of LandView.com, a reseller of complete DIY systems for farmers and agronomists.

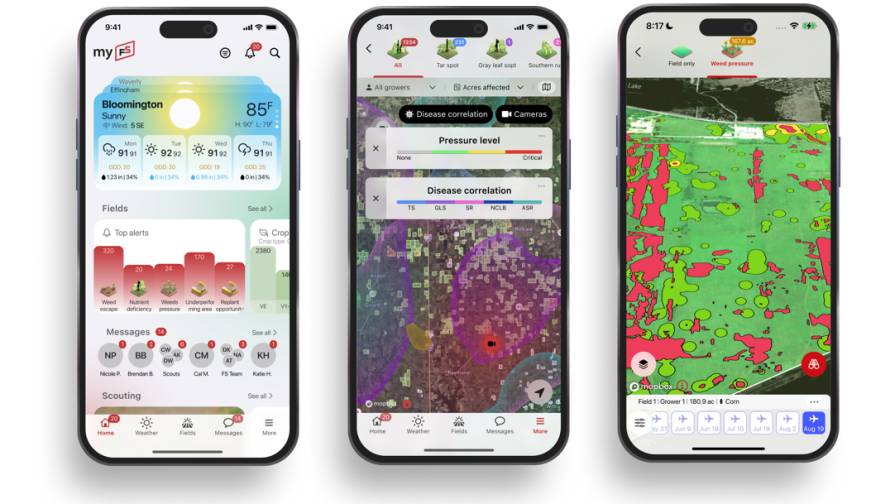

The closest analogy is facial recognition software, which today spits out the answer “Bob Smith” rather than “greying hair, wide eyes, bushy eyebrows, double chin.” Likewise, the drones of tomorrow will generate maps of “wild oats” or “fusarium” rather than the vegetation indices of today, which show general crop health or stress. Drones will show us what the problem is, rather than where there might be a problem.
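For readers unfamiliar with today's vegetation indices, the best known is NDVI, computed per pixel as (NIR - Red) / (NIR + Red). The sketch below applies it to a tiny made-up reflectance grid and flags low values. The reflectance numbers and the 0.4 threshold are hypothetical, chosen only to show that the result marks where a crop may be stressed without saying why.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero on empty pixels
    return (nir - red) / total


def stress_map(nir_band, red_band, threshold=0.4):
    """Flag pixels whose NDVI falls below an assumed, illustrative threshold."""
    return [
        [ndvi(n, r) < threshold for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Made-up reflectance values (0 to 1) for a 2 x 2 patch of field.
NIR = [[0.50, 0.48], [0.30, 0.52]]
RED = [[0.08, 0.10], [0.20, 0.07]]

FLAGS = stress_map(NIR, RED)
for row in FLAGS:
    print(["stressed?" if flagged else "ok" for flagged in row])
```

Only one pixel is flagged here, and the index offers no hint as to whether weeds, disease, or something else is the cause. That is the gap diagnostic sensing would need to close.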

Value Today

Does anyone really regret buying a smartphone before facial recognition could turn your face into an animated emoji? Not likely, because it still served as a research tool, a measurement device, and even a phone.

Likewise, I have yet to find an end user who regrets purchasing a drone. Some admittedly get more value than others by using them to their fullest extent. But all use their creativity and inevitably make great use of their UAV in a way that suits their operation, whether simply as a crop-scouting “eye in the sky,” as a “cow finder,” or as a management zone creation tool.

Rather than wait for the diagnostic solution, end users today already see incredible value in having a drone point out where there might be a crop health issue. We typically only fix things once we know they are broken. More than anything, drones help advisers discover problems that they didn’t know they had. This allows them to do what they are best at: solving problems.