Mythbusting: Is Weather More Unpredictable Due to a Changing Climate?

Some commonly repeated narratives are false, at least as literally stated, and they erode trust in weather forecasting. For example, a friend recently mentioned how “it seems clear, at least to the layperson, that variability due to climate change is making weather more unpredictable, making weather information more valuable.”

Another friend nodded in agreement, noting that she had heard her state of North Carolina had experienced two “500-year floods” in the past two years. I’ll explain in this month’s article why these statements are inaccurate, but in the moment I could barely contain myself. Since my friends are both agriculture experts, I countered with, “It seems obvious that health issues are on the rise due to GMO crops.” Of course, I didn’t believe that, but I felt good about provoking the same visceral reaction in them that they had caused in me.

The truth is that weather is not getting harder to predict. As I discussed in a previous blog post, our ability to forecast weather, especially from a few days out to a couple of weeks, is actually quite good and worth betting on for many decisions, whether they are matters of life and death or a question of when to plant, spray, irrigate, or harvest. Plenty of reports document this. For example, this 2015 paper by the Royal Meteorological Society found that five- to seven-day forecasts are as accurate as one-day forecasts were 50 years ago, and that eight- to 10-day forecasts were as accurate as five- to seven-day forecasts from 15 years prior. An often-quoted statistic is that the accuracy of our numerical weather prediction models improves by about one day of forecast lead time every 10 years, a figure based on long-term statistics from the European Centre for Medium-Range Weather Forecasts (ECMWF).
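To make the “one day per decade” rule of thumb concrete, here is a small Python sketch. It is only an illustration: it assumes the gain is perfectly linear over time, and the function name and its default rate are my own inventions, not something published by any forecasting center.

```python
def equivalent_lead_days(lead_days_then, years_elapsed, gain_per_decade=1.0):
    """Lead time today with roughly the skill of an older forecast.

    Assumes a linear gain of `gain_per_decade` forecast days per 10 years.
    That is a rule of thumb, not a physical law.
    """
    return lead_days_then + gain_per_decade * years_elapsed / 10.0


# A one-day forecast from 50 years ago maps to about a six-day forecast today,
# which falls inside the five- to seven-day range cited above.
print(equivalent_lead_days(1, 50))

# Five- and seven-day forecasts from 15 years ago map to 6.5 and 8.5 days.
print(equivalent_lead_days(5, 15), equivalent_lead_days(7, 15))
```

The second result comes in a little short of the eight- to 10-day figure quoted above, which is a good reminder that the rule of thumb is a long-term average and not an exact law.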

Furthermore, there is no scientific basis to assume that more variability in long-term weather patterns should have any impact on our ability to forecast weather in these usable time frames. This is because near-term, actionable weather forecasts are based primarily not on statistical models using past data, but on numerical weather prediction models that apply the physical equations of motion and thermodynamics to the current state of the atmosphere as observed, regardless of whether that state is extreme at a given location or not.

So, whether or not they are due to climate change, even the most extreme events are readily captured and forecast by the models days or weeks in advance. In fact, this is really where the numerical models shine. Sometimes the human meteorologists using the models are reluctant to issue a record-breaking forecast, suspecting the models are not quite right, when in fact the model guidance turns out to be pretty accurate. So, weather data is becoming more valuable because it is getting more accurate, not because climate change is making weather more unpredictable.

To be fair, I have heard reasonable arguments from colleagues involved in developing long-range (seasonal to annual) forecasts who feel that shifts in our longer-term patterns are making those forecasts more difficult. However, in another previous article, I pointed out that long-range forecasts are currently too broad and too inaccurate to be of any value in precision agriculture decision making, whether or not they are becoming more difficult to make.

Likewise, the way scientific metrics get summarized for tweetable content often leads to confusion and false beliefs. My friend’s concern that two 500-year flood events in two years indicate something amiss in our atmosphere is a great example of a complex statistic being interpreted too literally. On the surface, that does sound troubling, and it leads people to believe we have been counting such events for thousands of years. Yet this statistic is not based on thousands of years of actual observations. (Side note: it would be interesting to look back and see how many observations of rain existed in North Carolina just 200 years ago!) Rather, such metrics come from statistical analysis of more recent history, which is then extrapolated to estimate the expected average time between occurrences. A “500-year” label is not intended to mean that more than one such event in a much shorter time span would be abnormal, any more than it would be abnormal for a baseball player batting .200 to suddenly go on a big hitting streak, or for a coin to come up heads several times in a row.
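To put rough numbers on this, here is a minimal Python sketch. It assumes that a “500-year” event means a 1-in-500 (0.2%) chance in any given year, that years are independent of each other, and that locations are independent of each other. The function names and the figure of 100 locations are hypothetical choices of mine for illustration; they do not come from any flood study.

```python
def prob_at_least_one(return_period_years, horizon_years):
    """Chance of one or more events at a single location within the horizon."""
    annual_prob = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_prob) ** horizon_years


def prob_any_location(return_period_years, n_locations):
    """Chance that at least one of n independent locations sees the event in one year."""
    annual_prob = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_prob) ** n_locations


def expected_events_per_year(return_period_years, n_locations):
    """Average number of events per year summed over n locations."""
    return n_locations / return_period_years


# One location, over a 30-year span: roughly a 6% chance of a "500-year" event.
print(f"{prob_at_least_one(500, 30):.1%}")

# 100 hypothetical independent locations, one year: roughly an 18% chance
# that at least one of them records a "500-year" event.
print(f"{prob_any_location(500, 100):.1%}")

# Across 1,000 hypothetical locations, about two such events per year on average.
print(expected_events_per_year(500, 1000))
```

Real rain gauges and river basins are not independent, since one storm can hit many of them at once, and the extrapolated return periods carry their own uncertainty, so treat these numbers as an illustration of the statistics and not as a flood-risk estimate.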

As I write this, residents of the Florida Panhandle are in the immediate aftermath of devastating Hurricane Michael, and the people of the Carolinas are still recovering from last month’s massive rains from Hurricane Florence (one of the two “500-year flood events” discussed above). These areas need our physical and financial assistance, and they should be at the forefront of our thoughts and prayers. The cleanup will be long and grueling, and the effects will remain for years to come.

But there is a shining light in these tragedies: they further illustrate my point that weather forecasting is continually improving and is useful for decision making. These storms were accurately forecast well in advance. Combined with our modern, multi-channel communications technology, warnings were given and the vast majority of the population heeded them. Meteorologist Mike Smith wrote an excellent article that compares the loss of life in Michael to that of the 1900 Galveston hurricane, estimated to have also been a strong Category 4 storm. In 1900, 8,000 people were killed, or 19% of Galveston’s population. The population of the Panama City to Mexico Beach area is about 18,000. An equivalent percentage for Michael would have yielded a death toll of about 3,400. Remarkably, as of this writing, the death toll from Michael stands at 18, and the combined death toll from Florence and Michael is around 70.
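The comparison is simple arithmetic, sketched below in Python using only the figures quoted above. The function and variable names are mine, and the result is a back-of-the-envelope comparison, not an epidemiological estimate.

```python
def equivalent_death_toll(fatality_rate, population):
    """Deaths that would result if the same share of a population were lost."""
    return fatality_rate * population


galveston_fatality_rate = 0.19    # 8,000 deaths, 19% of Galveston's 1900 population
michael_area_population = 18_000  # Panama City to Mexico Beach, as quoted above
michael_deaths = 18               # toll at the time of writing

hypothetical_toll = equivalent_death_toll(galveston_fatality_rate, michael_area_population)

# About 3,420 deaths, which the article rounds to 3,400.
print(round(hypothetical_toll))

# The actual toll is lower by a factor of roughly 190.
print(round(hypothetical_toll / michael_deaths))
```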

Hurricane intensity and track are among the more challenging forecast problems in meteorology, because hurricanes form and thrive in an environment where the upper-level winds that steer weather systems are very weak. Yet, in recent decades, nobody in the US has really been caught by surprise by the short-notice impacts of a landfalling storm. Florence was a particularly shining example.

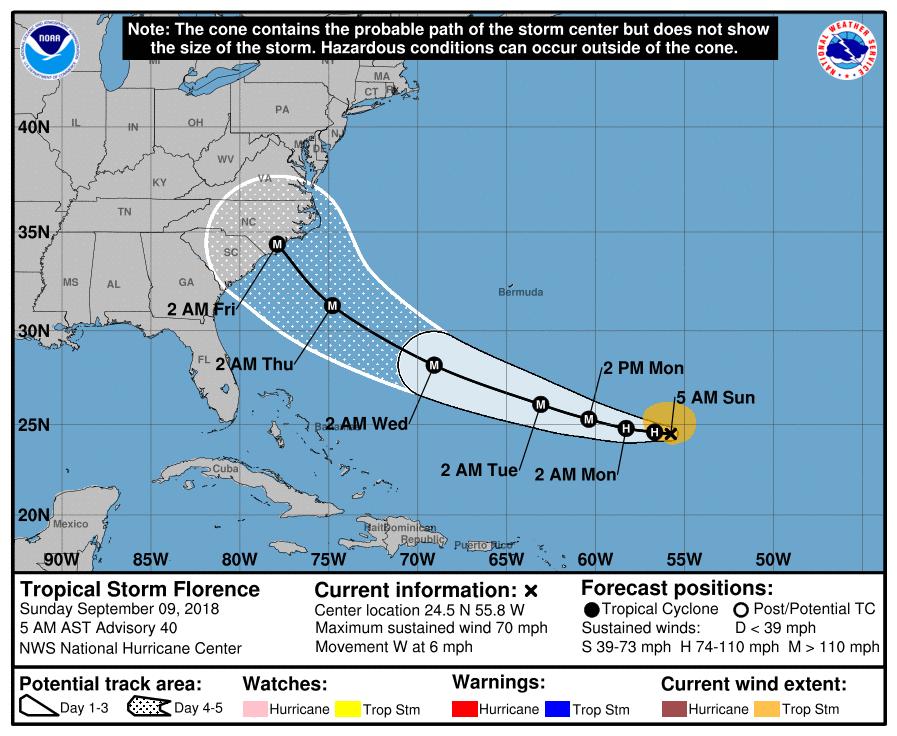

The National Hurricane Center (NHC) started tracking Florence and issuing routine discussions on August 30. As can be seen in the accompanying graphic from the NHC archive, by Sunday, September 9, NHC was forecasting Florence to be a major hurricane making landfall early on the morning of Friday, September 14. That ended up being a nearly perfect forecast, five days in advance. Hurricane watches spanning the North and South Carolina coasts were issued early on September 11 and upgraded to hurricane warnings later that same day, nearly three full days before landfall. By this time, NWS, private companies, and media outlets were consistently delivering the message that major flooding rains due to slow inland movement were becoming a real possibility.

Certainly, there is room for improvement, and there will always be some good forecasts and some blown forecasts.

But we have to be willing to use the information properly. In his book, The Signal and the Noise: Why So Many Predictions Fail - but Some Don’t, Nate Silver says this about weather forecasting in a discussion about the failure of the New Orleans mayor to heed warnings and take appropriate action in advance of Hurricane Katrina:

Weather forecasting is one of the success stories of this book, a case of man and machine joining forces to understand and sometimes anticipate the complexities of nature. That we can sometimes predict nature’s course, however, does not mean we can alter it. Nor does a forecast do much good if there is no one willing to listen to it.

I will continue beating my meteorological drum: it’s time for us as a community to really invest in applying predictive weather information. Furthermore, we shouldn’t just be grabbing 10-day forecasts of high and low temperature and rain, but combining these usable weather forecasts with soil and crop data to provide guidance on the real decisions being made: when can I plant, treat, irrigate, and harvest? This is how we will free up time for our agricultural brainpower to be spent on things that cannot yet be done in an automated fashion. Rather than avoid the use of predictive weather and soil-based analytics due to commonly held myths about weather forecasting, let’s let the value of weather forecasts stand on its own, based on what the record shows.